In the past couple of weeks, I’ve built and tested my new workstation PC. My previous workstation was a Dell Precision T5600 (built circa 2012) with a 6-core Xeon, 24GB of RAM, an AMD FirePro V5900 GPU, and a 120GB SSD. Its performance was still fine despite it being nearly a decade old, but it had two issues: it was looooooouuuud, and it was very power-hungry (200W at idle). So it was time for a replacement.

Many moons ago I used to work in a computer store, so I’m used to building my own machines. Why didn’t I last time? Because I needed something in a hurry, and somebody had their old workstation for sale on Gumtree, and it seemed like a good deal (and for the most part, it was).

My requirements for the new build were as follows:

- Silence at idle is a must. The fans can be audible, but not annoying, under load.

- 16GB of RAM at least, with the capability of holding at least 32GB in the future.

- On that note, it should be somewhat future-proof. I should be able to upgrade the motherboard, case, and storage independently of each other (something I couldn’t do with the Dell).

- It has to look good sitting on my desk (completely subjective, I know).

- At least 8 threads for compiling software, with the option of replacing the CPU in the future.

- Support for three 1920×1080 DisplayPort monitors.

And the parts I have chosen to make all this happen?

- Case: Fractal Design Era ITX

- PSU: Corsair SF450

- Motherboard: ASUS ROG Strix B460-I

- CPU: Intel Core i3 10100

- RAM: G.Skill Ripjaws V 16GB (1x16GB) 3200MHz

- SSD: Western Digital Black SN750 500GB

All up, the cost of the parts was around $1000 AUD.

One thing I found quite annoying when picking the parts for this machine was that the vast majority of available performance parts were gaming-oriented. They had RGB lights plastered on everything and were designed to look like something that had fallen off an army truck (ironic, given that the army does everything it can to avoid having RGB lights). In addition, most online reviews, both written articles and YouTube videos, came from a gamer’s perspective.

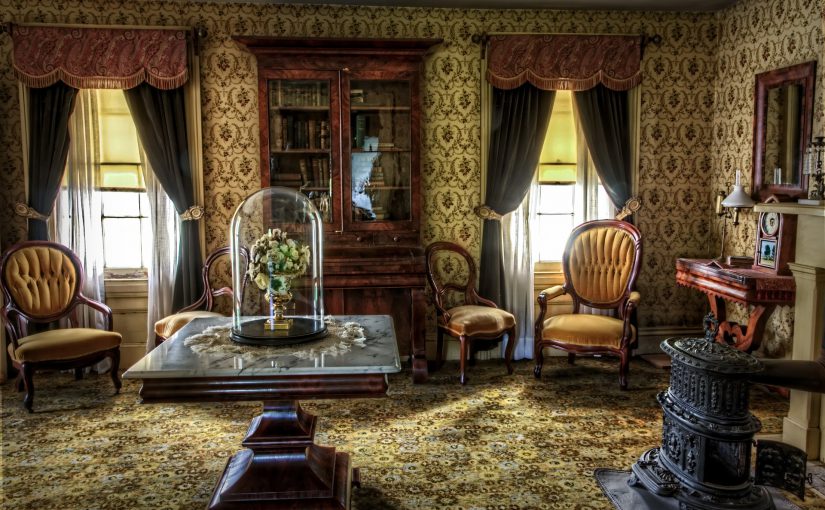

Take, for example, the Fractal Design Era case. Gamers hate this case because it has terrible cooling for the GPU. It’s a legitimate issue, sure, but only if you have a discrete graphics card. Without one, the problem doesn’t exist and the case is thermally fine. And the upside of that compromise in the GPU area is an ITX case that looks like it belongs in a modern art museum. Look at it. It’s beautiful!

My other part choices are pretty standard. An i3 is more than enough (it’s roughly as powerful as my old Xeon), 16GB of RAM is plenty (8GB would have been fine if not for Microsoft Teams), and a 500GB SSD boot drive (plus a re-used 4TB drive for storage) is fantastic and surprisingly cheap.

Did I meet my requirements? Yes. It’s silent: all the fans are zero-RPM enabled and switch off entirely at idle. It consumes only around 25W at idle and around 150W under full load, which I consider a huge win. The power savings alone should pay for the new machine in about 3 years.
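As a rough check on that payback figure, here is a minimal Python sketch. It compares only the idle figures (200W old vs 25W new), assumes the machine is left on 24 hours a day, and uses a hypothetical electricity price of $0.22 AUD/kWh. The price and the always-on usage are my assumptions for illustration, not measured values, so plug in your own tariff and hours.

```python
def payback_years(cost_aud: float, old_watts: float, new_watts: float,
                  hours_per_year: float, price_per_kwh: float) -> float:
    """Years until the energy saved covers the purchase cost."""
    # Power difference (W) times hours gives Wh; divide by 1000 for kWh.
    saved_kwh_per_year = (old_watts - new_watts) * hours_per_year / 1000
    saved_aud_per_year = saved_kwh_per_year * price_per_kwh
    return cost_aud / saved_aud_per_year


if __name__ == "__main__":
    # Assumptions: always on at idle, hypothetical $0.22 AUD/kWh tariff.
    years = payback_years(1000, 200, 25, 24 * 365, 0.22)
    print(f"Payback: {years:.1f} years")
```

With those inputs the idle saving is about 1,533 kWh, or roughly $337 AUD, a year, so the payback lands just under 3 years. A machine that is switched off overnight would take proportionally longer.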

It’s also future-proof. I’ve learned the hard way from my last two computer purchases (the Precision workstation mentioned above, and a Dell XPS 9350 laptop) that a lot of waste comes from tightly integrated components. If one thing breaks or is no longer up to the task, the whole thing has to go. The reason I bought the Precision was that the XPS only had 8GB of RAM, and I needed more so I could run SQL Server and Microsoft Teams at the same time (madness, I know). To reduce the environmental impact, I bought the Precision second-hand, which brought its own set of issues. Now I had a powerful computer, but I also had a loud, power-hungry, and (yet again) non-modifiable system. This is one small glimpse into the world of throw-away, consumable technology products.

In my mind, the best way to minimise the environmental damage of our computer use is to make sure everything we buy follows established standards. Standard parts last the longest and can be re-used by swapping them between systems. Take, for example, the ATX standard. It has changed so little that you could buy an ATX case from 1998 and build a modern system in it. In fact, people do.

My goal is to have this case and power supply for the next 10 years, and this motherboard for the next 5. I can see a CPU, RAM, and SSD upgrade in the future, but the core platform should last a good long while.